A.1: Case Studies

The independent case studies are a sequence of individual analytical assignments designed to develop critical, technical, and ethical literacy in contemporary AI systems. Rather than assessing tool proficiency or output quality in isolation, the case studies focus on judgement, agency, and the ability to reason about how AI systems behave, where risks arise, and where responsibility ultimately lies.

Each case targets a distinct layer of the AI stack. Taken together, they move from questions of responsibility and authorship, through system mechanics, to data and dataset risk. Students are expected to work with concrete systems, artefacts, or documented processes, and to ground their analysis in observable behaviour rather than abstraction or speculation.

Before beginning any case study, students must review the general structure, expectations, and submission requirements outlined here.

Case Study Structure

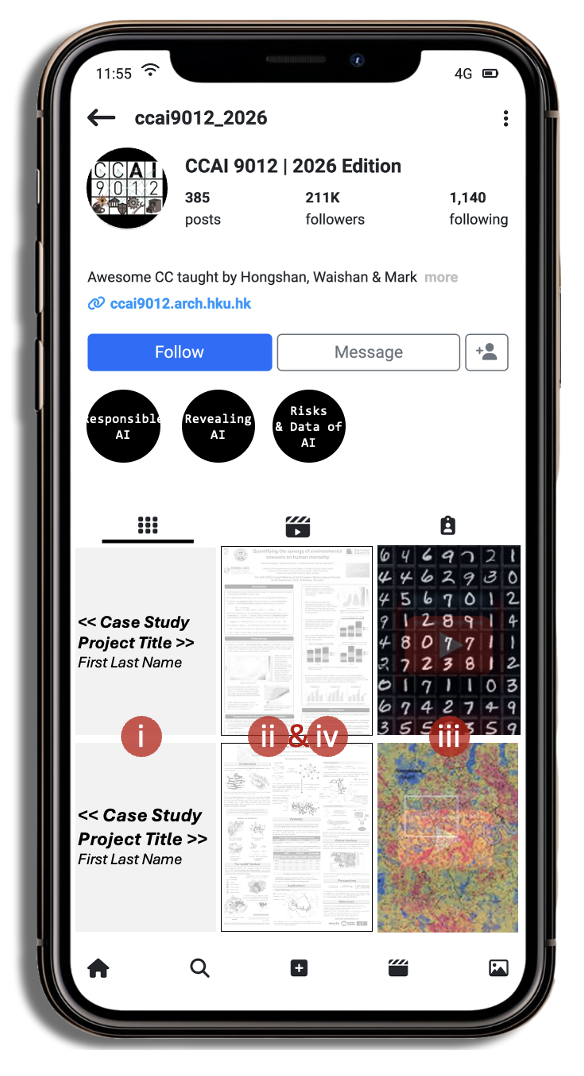

Each case study consists of four required components:

- (i) Annotation: A written analytical reflection that documents intent, decisions, and reasoning. This is where claims must be made explicit and supported.

- (ii) Artefact: The primary output of the case study. This may be visual, written, computational, or hybrid, depending on the case. The artefact demonstrates engagement with the AI system under study.

- (iii) Vignette: A short video presentation, no longer than 90 seconds, communicating the core argument, findings, and insights of the case study. (Formatting guidelines will be released soon.)

- (iv) Evidence: Concrete documentation of the assignment-specific process. This component is required only for some case studies.

Students are expected to begin by reviewing the general case study outline [link], which sets out shared structure, submission requirements, and assessment criteria. Each case study then provides a project-specific outline that refines these expectations in relation to its particular focus. Submissions should follow the general outline unless explicitly superseded by case-specific instructions.

| Case | Title | Brief Description |
|---|---|---|
| A.1.1 | Responsibility & AI | Examines responsibility and authorship in generative AI through the deliberate production of a non-default artefact. Students document how they resisted model defaults, navigated legal, ethical, regulatory, or creative risks, and identified clear boundaries between machine output and human judgement, supported by evidence of divergence. |
| A.1.2 | Mechanics of AI | Investigates how a specific technical aspect of an AI system operates, such as model architecture, training approach, or inference behaviour. Students analyse how this mechanism shapes system strengths, limitations, and failure modes, linking observed behaviour to underlying design choices rather than surface-level outputs. |
| A.1.3 | Datasets & Risks of AI | Analyses a dataset or data-generation pipeline used in AI systems, focusing on how data collection, labelling, filtering, or curation decisions influence outputs and introduce risk. Emphasis is placed on bias, representational gaps, and downstream consequences, along with reasoned proposals for mitigation or improvement. |

Submitted case studies will be archived online to showcase your extraordinary work.

Please prepare your case study using the template [here].

This document contains:

- submission instructions (via the Google Form link indicated in Moodle)
- a template for formatting your case study for online archival as a single PPTX submission.